Assoc. Prof. Jason Anastasopoulos argues that AI is not merely a tool of efficiency, but a political force that may reconfigure both democratic governance and populist mobilization. In this ECPS interview, he warns that replacing bureaucrats with AI can erode “democratic legitimacy” and produce what he calls “automated majoritarianism,” where average cases are processed efficiently while minorities and outliers are disadvantaged. He also challenges the assumption that AI automatically strengthens authoritarian rule, showing instead how false positives, false negatives, and “threshold whiplash” can generate resistance within authoritarian systems. Most strikingly, he suggests that AI may transform populism itself: unlike earlier technological disruptions centered on manual labor, AI increasingly threatens “intellectual work and highly skilled labor,” potentially broadening the social base of anti-elite backlash and reshaping the future of political discontent.

Interview by Selcuk Gultasli

At a moment when artificial intelligence is increasingly presented as a transformative force in governance, public administration, and political control, Jason Anastasopoulos, Associate Professor of Public Administration and Policy at the University of Georgia, offers a far more cautious and analytically nuanced perspective. In this ECPS interview, he argues that the effects of AI cannot be understood through simplistic assumptions of either technological salvation or authoritarian omnipotence. Instead, AI emerges in his account as a politically embedded system whose consequences depend on data quality, institutional incentives, and the broader regime context in which it operates.

A central theme running through the interview is the challenge AI poses to conventional understandings of democratic legitimacy and representation. Anastasopoulos warns that “replacing bureaucrats with AI has the potential to erode democratic legitimacy and decrease the extent to which people not only perceive the legitimacy of the system but also actually receive fair outcomes.” This concern is rooted in his broader claim that algorithmic governance does not merely automate decisions; it subtly transforms the normative foundations of administration itself. Because AI systems rely on “data from the past and on statistical averages,” whereas human officials can apply individualized judgment, the shift toward automation risks creating what he calls “automated majoritarianism,” in which average cases are processed efficiently while minorities and outliers are systematically disadvantaged.

At the same time, Assoc. Prof. Anastasopoulos highlights the political implications of AI beyond democratic administration, particularly in relation to populism and authoritarianism. Against the widespread belief that AI necessarily strengthens authoritarian rule, he emphasizes the “autocrat’s calibration dilemma,” showing how false positives and false negatives generate what he terms “threshold whiplash.” Far from ensuring seamless control, AI can create backlash, misclassification, and resistance, even within highly monitored societies. In this respect, the interview complicates dystopian assumptions about authoritarian omniscience by showing how predictive technologies can also destabilize the very regimes that rely on them.

Most strikingly, however, Assoc. Prof. Anastasopoulos suggests that AI may reshape populist politics in new ways. Whereas earlier waves of technological disruption primarily displaced manual and industrial labor, contemporary AI increasingly threatens “intellectual work and highly skilled labor.” This shift, he argues, may transform the social basis of political discontent. Populist mobilization, long rooted in anti-elite appeals to economically dislocated working-class constituencies, may now expand to incorporate professional and knowledge-sector groups who find themselves newly exposed to technological precarity. In that sense, AI may transform populism not only by intensifying backlash against opaque governance, but also by mobilizing constituencies that have not historically stood at the center of populist revolt.

In sum, Assoc. Prof. Anastasopoulos’s reflections offer a sophisticated intervention into contemporary debates on AI and politics. His analysis underscores that AI is neither politically neutral nor institutionally self-executing. Rather, it is a force that can unsettle democratic legitimacy, complicate authoritarian control, and reconfigure the social terrain of populist mobilization. Far from being merely a tool of efficiency, AI may become a catalyst for profound political realignment.

Here is the edited version of our interview with Associate Professor Jason Anastasopoulos, revised slightly to improve clarity and flow.

AI Doesn’t Simply Strengthen Authoritarian Control

Professor Anastasopoulos, welcome. In “The Limits of Authoritarian AI,” you introduce the “autocrat’s calibration dilemma,” where predictive systems must trade off false positives against false negatives. How does this structural constraint reshape prevailing assumptions that AI inherently strengthens authoritarian control?

Assoc. Prof. Jason Anastasopoulos: That’s a really good question. I think the common conception of AI is that it will strengthen authoritarian control in a linear fashion, and this makes sense to a certain extent. It is also true in the short run. One of the recurring themes in dystopian narratives is the emergence of a surveillance state in which authoritarian governments exert control over their populations through cameras, social credit systems, and similar technologies. To some extent, this does seem to be the case in the short term. In the long run, however, the use of AI is much more complicated.

This is because of the errors that it generates—namely, Type 1 and Type 2 errors. For readers who may not be familiar with these concepts, they refer to false positives and false negatives, respectively, and are commonly introduced in basic statistics. A Type 1 error occurs when someone is incorrectly identified as a positive case—for example, when a COVID test indicates that a person has the virus when they do not. A Type 2 error, by contrast, occurs when the test indicates that someone does not have the virus when they actually do.

All AI systems, as fundamentally predictive systems, operate under these same constraints. They can misclassify individuals, either identifying someone as a threat to the regime when they are not, or failing to identify someone who actually poses a risk. These errors carry political consequences, and managing those consequences becomes an inherent challenge for authoritarian regimes. Each type of error entails distinct political trade-offs, which I would be happy to elaborate on further.
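The two error types described above can be counted directly once predictions are compared with what actually happened. The following is a minimal sketch in Python; the function name `count_errors` and the six test results are hypothetical and chosen only to illustrate the definitions.

```python
def count_errors(actual, predicted):
    """Count Type 1 (false positive) and Type 2 (false negative) errors.

    Both arguments are equal-length lists of booleans, where True means
    "positive" (for example, "has the virus").
    """
    false_positives = sum(1 for a, p in zip(actual, predicted) if p and not a)
    false_negatives = sum(1 for a, p in zip(actual, predicted) if a and not p)
    return false_positives, false_negatives


# Hypothetical test results, invented for illustration only.
actual = [True, True, False, False, False, True]
predicted = [True, False, True, False, False, True]

type1, type2 = count_errors(actual, predicted)
print(f"Type 1 errors (false positives): {type1}")
print(f"Type 2 errors (false negatives): {type2}")
```

The same counting applies whether the "positive" label is a medical diagnosis or a regime's judgment that someone is a threat.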

Authoritarian Regimes Risk ‘Threshold Whiplash’ When Using AI for Control

Building on this dilemma, to what extent does the probabilistic nature of AI undermine the aspiration of authoritarian regimes to achieve total informational dominance and preemptive repression?

Assoc. Prof. Jason Anastasopoulos: This is where the political consequences of Type 1 and Type 2 errors come into play, and where authoritarian regimes run into resistance when using AI in the long run, as opposed to the short run. In the short run, these tools are indeed tremendous for monitoring populations. Facial recognition systems can be linked to databases that identify people instantaneously. In China, for example, a social credit system is being developed that could potentially track movements and shape behaviors in ways consistent with regime preferences. But in the long run, the calibration dilemma that autocrats face becomes decisive.

This is something authoritarian regimes actually institutionalize. In China, bureaucracies exist to calibrate AI systems for these kinds of Type 1 and Type 2 errors. Let me outline the political issues that arise from these errors. For Type 1 errors, the biggest problem in an authoritarian context—where a leader is trying to predict who is risky—is that individuals are labeled as threats when they are not. When too many false positives are generated, opposition to the regime itself increases. In other words, you might have 100 individuals who are genuinely threatening, and the AI system identifies them—but it also identifies 100,000 others who are not. Those individuals, ironically, may become threats precisely because they are falsely labeled as such.
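The arithmetic behind the 100 versus 100,000 illustration can be made explicit. This is a small sketch using only the two figures mentioned above; the function name `share_of_genuine_threats` is hypothetical, and the numbers are the interview's illustrative ones, not measured data.

```python
def share_of_genuine_threats(true_threats_flagged, false_positives):
    """Fraction of all flagged individuals who are genuinely threatening."""
    total_flagged = true_threats_flagged + false_positives
    return true_threats_flagged / total_flagged


# Illustrative figures from the example above: 100 genuine threats
# flagged alongside 100,000 people who are not threats.
precision = share_of_genuine_threats(100, 100_000)
falsely_flagged_per_threat = 100_000 / 100

print(f"Share of flagged people who are genuine threats: {precision:.2%}")
print(f"People falsely flagged per genuine threat: {falsely_flagged_per_threat:.0f}")
```

Under these assumed figures, roughly one flagged person in a thousand is a genuine threat, which is why false positives dominate the political fallout.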

So, because of false positives, the regime creates more threats than it would have had otherwise. Authoritarian rule depends on a belief that compliance leads to tolerable outcomes—being left alone, not punished, not having one’s mobility restricted. Type 1 errors undermine this expectation, producing backlash and fueling social movements.

We have seen this in cases such as Zero-COVID policies and the Henan bank protests, which we discuss in the paper. Individuals were falsely labeled as COVID-positive to prevent them from protesting a banking scandal. This generated public outrage and forced the government to scale back. In other words, the use of AI produced the very instability it was meant to prevent.

For Type 2 errors, the problem is reversed. The regime faces real threats, and if AI systems fail to detect them, those threats can operate in the shadows. This dynamic produces what we call a cycle of “threshold whiplash.” Initially, regimes set thresholds low to maintain tight control, which increases Type 1 errors and triggers backlash. In response, they raise the threshold, which increases Type 2 errors, allowing real threats to go undetected.

At the same time, individuals alienated by false labeling may become politically active and organize against the regime. In this way, AI generates a cycle in which efforts at control inadvertently produce the very resistance the regime seeks to suppress.
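The trade-off behind this cycle can be sketched with a toy risk-scoring example. Everything here is an assumption made for illustration: the scores, the labels, the thresholds, and the function name `errors_at` are invented and do not come from the paper.

```python
# Hypothetical risk scores in [0, 1]; True marks a genuine threat.
population = [
    (0.10, False), (0.20, False), (0.35, False), (0.40, True),
    (0.55, False), (0.60, False), (0.70, True), (0.80, False),
    (0.90, True), (0.95, True),
]


def errors_at(threshold, scored):
    """Flag everyone scoring at or above the threshold, then count errors.

    Returns (false_positives, false_negatives).
    """
    false_positives = sum(
        1 for score, is_threat in scored if score >= threshold and not is_threat
    )
    false_negatives = sum(
        1 for score, is_threat in scored if score < threshold and is_threat
    )
    return false_positives, false_negatives


# Raising the threshold trades false positives for false negatives.
for threshold in (0.3, 0.5, 0.75, 0.92):
    fp, fn = errors_at(threshold, population)
    print(f"threshold={threshold:.2f}  false positives={fp}  false negatives={fn}")
```

In this toy data no threshold removes both kinds of error at once, which is the structural constraint the calibration dilemma describes.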

Authoritarian Incentives to Report Stability Degrade AI from Within

Your work suggests that prediction systems are not merely technical tools, but political instruments embedded in institutional incentives. How do bureaucratic and party-level incentives distort AI outputs in authoritarian settings?

Assoc. Prof. Jason Anastasopoulos: That’s a really good question. The focus here is primarily on China, where regional bureaucratic leaders have incentives to report stability metrics to Beijing. There is a strong desire for Beijing to see that, across all regions within China, things are looking good—that conditions are stable.

What happens with AI systems, then, is that officials tend to downplay any activity identified by these systems that might suggest instability in a region. As a result, when such distorted data is fed into the new AI systems being developed, it creates a significant gap between on-the-ground realities and what the AI system reports, ultimately degrading the quality of the system itself. In this way, bureaucratic incentives to report stability end up undermining AI performance over time, as these systems are trained on data that is simply of low quality.

AI Decision-Making Can Erode Both Perceived and Actual Fairness

In your research on democratic administration, you argue that replacing human discretion with AI risks eroding accountability and reason-giving. How should we theorize the relationship between algorithmic governance and democratic legitimacy?

Assoc. Prof. Jason Anastasopoulos: One of my papers on the problem of replacing bureaucratic discretion with AI identifies a recent trend in many places; some of it is aspirational, and some of it has actually been implemented. The trend is that many regimes, not just authoritarian regimes but democratic countries as well, are seeking to replace bureaucratic discretion, and bureaucrats more generally, with AI systems.

For example, Keir Starmer is one of the figures who is very interested in doing so in the UK. Widodo in Indonesia has actually replaced a few levels of the bureaucracy with AI systems. One of the problems that the paper identifies is that when you replace bureaucratic discretion with AI systems, you remove some of the important safeguards that exist for democratic governance.

Specifically, AI systems have this issue where they do not think like human beings—that is the fundamental problem. Democratic legitimacy, in many ways, is based on the idea that another human being will review your case and be able to reason through whatever decision needs to be made by the state in your particular situation. What I argue in that paper is that there are certain types of decisions—decisions relating to rights, and decisions involving very important issues where someone’s rights could be taken away—that should not be delegated to automated systems. This is because the idea of justice and democracy itself depends on a human being assessing your case at an individual level and applying human judgment in a way that would be deemed fair both theoretically, from a philosophical perspective, and in terms of the perceptions of those being judged.

So, a lot of it comes down to the fact that replacing bureaucrats with AI has the potential to erode democratic legitimacy and decrease the extent to which people not only perceive the legitimacy of the system but also actually receive fair outcomes.

Another problem I identify in that paper is a technical one. I have training in machine learning and statistics, as well as in political philosophy, and I try to understand how these systems work and what their technical implications are. One of the problems with AI, and with any prediction system, is that it does a very good job of assessing the average case, but a very poor job of assessing cases that would be considered edge cases. If the circumstances that a person brings to an AI system are very unusual, the system is not going to be able to provide a good prediction.

As a result, you have what I call automated majoritarianism, where the AI system performs well for most people, but for minority groups and for individuals whose cases fall outside the norm, it performs very poorly. This can ultimately alienate a large segment of the population. These are some of the key issues I identify regarding the risks of replacing bureaucratic discretion with AI.
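A deliberately crude sketch can show how a system built on aggregate patterns may look accurate overall while failing unusual cases. The cases, group names, and the "model" below (which simply learns the single most common decision) are all hypothetical stand-ins, not a description of any deployed system.

```python
from collections import Counter

# Hypothetical cases: (group, correct_decision). Invented for illustration.
cases = (
    [("typical", "approve")] * 85
    + [("typical", "deny")] * 5
    + [("atypical", "approve")] * 2
    + [("atypical", "deny")] * 8
)

# "Training" on aggregate data: learn the single most common decision.
most_common_decision = Counter(decision for _, decision in cases).most_common(1)[0][0]


def accuracy(group=None):
    """Share of cases where the learned decision matches the correct one."""
    relevant = [decision for g, decision in cases if group is None or g == group]
    return sum(decision == most_common_decision for decision in relevant) / len(relevant)


print(f"Overall accuracy:  {accuracy():.0%}")
print(f"Typical cases:     {accuracy('typical'):.0%}")
print(f"Atypical cases:    {accuracy('atypical'):.0%}")
```

Real prediction systems are far more sophisticated than this, but the overall figure can still hide poor performance on a small group in the same way.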

Automated Majoritarianism Leaves Minority Cases Behind

If democratic governance depends on individualized judgment and justification, can AI ever be reconciled with these normative commitments, or does it fundamentally reconfigure the meaning of administrative fairness?

Assoc. Prof. Jason Anastasopoulos: I think it actually does end up fundamentally reconfiguring the meaning of administrative fairness, and it does so in a way that is subtle and not very obvious. A lot of it, again, comes down to how AI systems make decisions versus how humans make decisions.

Humans make decisions based on their experience and their adherence to norms that are either embedded in institutions or exist in society. Whereas AI systems simply make decisions based on data from the past and on statistical averages. So, with a human being, you get an individualized decision, whereas with an AI system, you get a decision based on aggregate data.

That has implications for the future of administrative fairness, because the types of decisions made by AI systems, given how they function, are fundamentally different from those made by humans. How those decisions differ will depend on the circumstances to a certain extent. But we have already seen, for example, in cases from the criminal justice system, that AI systems, when they try to predict whether someone is likely to be a recidivist, can produce problematic outcomes. There is a system called COMPAS.

This is not really an AI system per se; it is more of a machine learning algorithm, although most AI systems are based on machine learning to some extent. What the COMPAS system does is to make predictions about who would be considered at high risk of recidivism in the future. Imagine someone is arrested, their data is collected, and it is fed into this algorithm. The algorithm then predicts whether that person is risky, on a scale from 1 to 10, and this affects how they are treated within the criminal justice system. If they are predicted to be high risk, they may receive a harsher sentence and be treated more punitively; if they are predicted to be low risk, they are more likely to receive leniency.

What some authors at ProPublica found in a 2016 study was that these systems generated a much higher false positive rate for African American offenders compared to white offenders. In other words, they predicted that Black offenders were more likely to be a future risk even when they were not. This is what the well-known ProPublica article “Machine Bias” demonstrated.

In that case, it showed that AI systems can perpetuate biases into the future. They can create a situation where past discrimination becomes embedded in the criminal justice system, and once that happens, it is much more difficult to correct than with human decision-makers. With humans, you can intervene more directly—you can audit decisions or remove individuals—but with AI systems, you would have to change the entire system, including vendors and underlying models, which is far more complex.

So, these are some of the ways in which AI can reshape our understanding of administrative fairness. We will need to develop systems to audit AI in order to prevent bias, and we will have to continually ensure that these systems do not embed biases that could create long-term unfair outcomes for minority groups and others whose lives are affected by AI-driven decisions.
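One basic check that an audit of this kind might include is comparing false positive rates across groups. The sketch below assumes a simple record format with hypothetical field names (`group`, `predicted_high_risk`, `reoffended`); the records are invented to show the calculation and are not ProPublica's data or COMPAS output.

```python
def false_positive_rate(records):
    """False positives divided by everyone who did not reoffend."""
    did_not_reoffend = [r for r in records if not r["reoffended"]]
    if not did_not_reoffend:
        return float("nan")  # rate is undefined without any negatives
    flagged = sum(1 for r in did_not_reoffend if r["predicted_high_risk"])
    return flagged / len(did_not_reoffend)


def fpr_by_group(records):
    """Compute the false positive rate separately for each group."""
    groups = sorted({r["group"] for r in records})
    return {
        g: false_positive_rate([r for r in records if r["group"] == g])
        for g in groups
    }


def make(group, predicted_high_risk, reoffended, n):
    """Build n identical hypothetical records."""
    record = {
        "group": group,
        "predicted_high_risk": predicted_high_risk,
        "reoffended": reoffended,
    }
    return [dict(record) for _ in range(n)]


# Invented records for two hypothetical groups, A and B.
records = (
    make("A", True, False, 4) + make("A", False, False, 6) + make("A", True, True, 5)
    + make("B", True, False, 2) + make("B", False, False, 8) + make("B", True, True, 5)
)

for group, rate in fpr_by_group(records).items():
    print(f"Group {group}: false positive rate = {rate:.0%}")
```

A gap between groups on this metric would not settle whether a system is fair, since fairness criteria can conflict, but it is the kind of disparity an ongoing audit would surface for review.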

AI Should Inform Decisions, but Humans Must Remain in the Loop

You propose a “centaur model” where AI complements rather than replaces human decision-makers. What institutional safeguards are necessary to prevent this hybrid model from drifting toward de facto automation and accountability erosion?

Assoc. Prof. Jason Anastasopoulos: The idea behind the Centaur model is pretty simple. We need to ensure that when really important decisions are being made within government—decisions that can affect people’s lives and relate to issues of fairness or justice—there is always a human decision-maker in the loop. An AI system can be good at making predictions, but it should only be used as one piece of information within a broader file that a human decision-maker can draw upon.

The problem with this kind of Centaur model, however, is that it runs up against the incentives many governments have to cut costs. This is especially true at the state and local levels in the United States, and also for lower-level governments in Europe and elsewhere, where there are strong incentives to automate decisions.

What may ultimately prevent the Centaur model from being implemented—even though I think it is a good model—is the political economy of governance. A system that combines human judgment with AI could produce decisions that are both fairer and more just than those made by humans alone, who have biases, or by AI systems alone, which come with their own set of problems.

But these advantages may be outweighed by structural pressures. If there is insufficient tax revenue, sustained pressure to cut costs, and a broader cultural disposition—especially in the United States—that views bureaucrats as unnecessary or ineffective, then populist demands to reduce administrative capacity may lead to full automation. In such a scenario, the Centaur model would not take hold.

Instead, you could end up with layers of bureaucracy fully delegated to AI, which introduces its own risks. In that sense, the key issue is public pressure to shrink bureaucracies—something we have seen in various reform movements—combined with governments’ ongoing efforts to reduce costs. Together, these dynamics can push systems toward automated governance rather than hybrid models, and that is something people need to be aware of.

Addressing this requires a broader cultural shift. People need to understand that bureaucrats are not simply obstacles—such as those encountered at the Department of Motor Vehicles—but are integral to ensuring fairness and accountability in governance. Without that shift, we risk moving toward fully automated systems that may replicate the flaws of bureaucracies while simply making decisions faster, not better. That is the main concern I have.

AI Can Centralize Power by Aligning Decisions More Closely with Political Leaders

Your work on delegation highlights how authority is structured through constraints and discretion. How does the delegation of decision-making authority to AI systems alter classic principal–agent problems in democratic governance?

Assoc. Prof. Jason Anastasopoulos: That’s a really good question. The way in which the delegation of authority to AI systems alters the classical problem is the following. The traditional principal–agent problem between bureaucracies and higher levels of authority is that, say in the United States, Congress wants a law passed. They pass the law and then expect it to be implemented in a way that is consistent with their intentions.

However, members of Congress and other elected leaders often lack the expertise required to implement laws themselves. For example, in the case of environmental legislation, they do not have the technical knowledge to determine how regulations should be applied in practice. As a result, they delegate this authority to expert bureaucrats, such as those in the EPA, who are responsible for implementation. The principal–agent problem arises because bureaucrats may have preferences that differ from those of elected leaders, meaning that delegation can produce outcomes that do not fully align with the preferences of those who delegated the authority.

In theory, AI could mitigate this problem. Elected leaders could design and select AI systems that align more closely with their own preferences, whether ideological or pragmatic. From the perspective of higher-level officials, AI systems can therefore be appealing, as they may replace bureaucrats who exercise independent discretion and might make decisions that leaders do not favor.

However, I think this is problematic from the public’s perspective. It leads to greater centralization of power and reduces discretion at the ground level. Bureaucrats often possess forms of expertise that elected leaders simply do not have, and replacing that expertise with AI systems could introduce significant risks. Laws might not be implemented correctly, and outcomes might reflect not the interests of the public, but rather the preferences of elected leaders, or even the interests of the vendors who design the AI systems. This is where a new kind of principal–agent problem can emerge.

Perceived Unfair AI Decisions Can Fuel Populist Backlash

In the context of populism, how might the increasing use of AI in governance deepen representation gaps, particularly if citizens perceive decisions as opaque, impersonal, or technocratically imposed?

Assoc. Prof. Jason Anastasopoulos: I think that’s a real problem, and much of it comes down to the idea of backlash that I discuss in my paper on “The Limits of Authoritarian AI” with my co-author, Jason Lian.

If people perceive that AI systems are making decisions that are unfair, the resentment and backlash this generates can fuel an increase in populist movements and a desire to remove those who rely on AI systems but are not populists. That is one key risk I see emerging.

AI can certainly increase support for populist leaders. Such leaders are often somewhat anti-technology and frequently campaign on anti-technology platforms. If AI-based decisions generate sufficient backlash, this can provide them with powerful political fuel. In that context, we could see a sharp rise in support for populist leaders as a means of rolling back the system to a time before AI systems were producing decisions perceived as unfair.

Technological Displacement Expands the Social Base of Populism

Your research on technological change and populism suggests that economic disruption can fuel political discontent. How might AI-driven labor displacement interact with democratic backsliding and the rise of populist movements?

Assoc. Prof. Jason Anastasopoulos: There’s a lot of research on this, which finds that populists often draw on the idea that technology, especially automation, will replace people and take their jobs away. This is something we’ve seen since the beginning of the Industrial Revolution. The Luddites in England were, of course, a well-known populist movement that relied on an anti-technology stance.

The Luddite movement emerged in response to the invention of the steam engine, which displaced large amounts of guild labor in textile production. Whenever there is labor displacement due to technological change, there is almost certainly backlash from those who are unemployed or otherwise disaffected by these new automation systems.

In that sense, AI is no different. It gives populist leaders something to point to, allowing them to claim that they will provide solutions to AI-driven displacement. But in practice, when they are elected, they often fail to deliver those solutions. Instead, they may cooperate with those who develop AI systems and even promote their expansion.

Nevertheless, this remains a powerful and enduring populist position. Historically, populist leaders promise to address the consequences of technological change, yet technological progress continues regardless. Still, their ability to mobilize those affected by labor displacement is likely to grow as more jobs are disrupted.

What is particularly interesting about AI, compared to earlier technologies like the steam engine, is that it is displacing not only manual labor but also intellectual work and highly skilled labor. As a result, the nature of populist and social movements may evolve, as populists begin to incorporate these groups into their constituencies rather than focusing primarily on the working class. This could become an important new dimension of populist politics moving forward.

Distrust of Bureaucracy Could Enable ‘Algorithmic Populism’

To what extent does AI governance risk creating a new form of “algorithmic populism,” where political actors leverage automated systems to claim efficiency while obscuring responsibility?

Assoc. Prof. Jason Anastasopoulos: That’s exactly the problem I identified before. Could you explain what you mean by algorithmic populism more specifically?

Political leaders or actors leveraging automated systems to claim efficiency while obscuring responsibility.

Assoc. Prof. Jason Anastasopoulos: That’s the general problem with AI. It’s one of the key tensions. I’m not entirely sure about the idea of algorithmic populism in general, but one condition that could give rise to it, especially in cultures like the United States where there is a deep distrust of bureaucracies, is a situation in which AI systems are perceived as being better than human bureaucrats.

In those cases, it would be easy for a political actor—an “algorithmic populist,” as you put it—to accelerate the replacement of bureaucrats with AI in government, which would again lead to many of the problems I discussed earlier. And some figures—Donald Trump, for example, who could be considered a populist—might even be seen as algorithmic populists to a certain extent, in that they promote technology and advance a strong AI agenda.

In such situations, you create a scenario where you end up with the same problems associated with AI that I mentioned earlier, but the process continues to advance. I don’t know exactly what the future would look like in terms of how an algorithmic populist movement might develop, but it is an interesting idea to consider.

Data Quality Will Determine Whether AI Supports Democracy or Control

And lastly, Professor Anastasopoulos, looking ahead, do you see AI as ultimately stabilizing or destabilizing democratic systems—and what key variables will determine whether it becomes a tool of democratic renewal or authoritarian entrenchment?

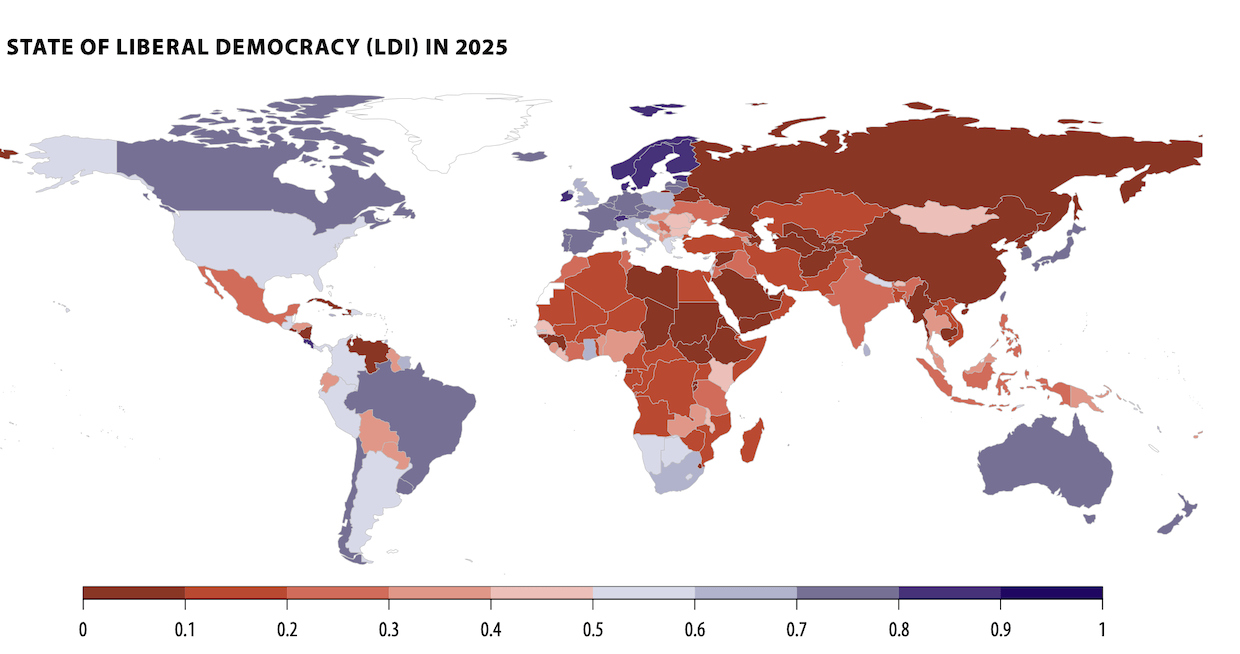

Assoc. Prof. Jason Anastasopoulos: I’m actually pretty hopeful about AI and its effect on democracy. I think it’s going to have two effects in general: one within democratic systems and the other within authoritarian systems.

I think a lot of it comes down to data quality. In democratic systems, AI can do a very good job of helping decision-makers make fairer, more just, and more efficient decisions. That’s because, within democratic systems, the information fed into AI systems comes from a range of democratic processes—deliberation, free speech, and so on. As a result, the quality of AI systems is very high when they are used to further democratic principles and support democratic rule.

However, in authoritarian systems—and this is something I discuss in “The Limits of Authoritarian AI”—authoritarian regimes seek to use AI to control their populations. The fundamental problem they encounter is one of information. This problem relates directly to the fact that when people are being monitored, they change their behavior and hide their preferences. As a result, the information that feeds into AI systems ends up being of much lower quality in authoritarian regimes than in democratic ones. I believe this tends to further destabilize authoritarian regimes as they attempt to tighten control through AI systems and encounter the kind of threshold whiplash I mentioned earlier. Over time, authoritarian regimes may come to realize that AI tools are not the panacea they may have expected. That realization could open the door for social democratic movements within authoritarian regimes to take advantage of the instability created by AI.

In sum, for democratic nations, as long as we avoid a situation in which we eliminate all layers of government and replace them with AI, it can be a stabilizing force. In contrast, in authoritarian regimes, it is likely to be destabilizing—at least temporarily—and may eventually push those systems toward greater democratization if they continue to rely on AI. They might, of course, decide to abandon AI systems and revert to older forms of authoritarian control, but I don’t think that is very feasible in the modern world. Instead, what we may see is a gradual broadening of democracy globally as AI systems are adopted for different purposes.